
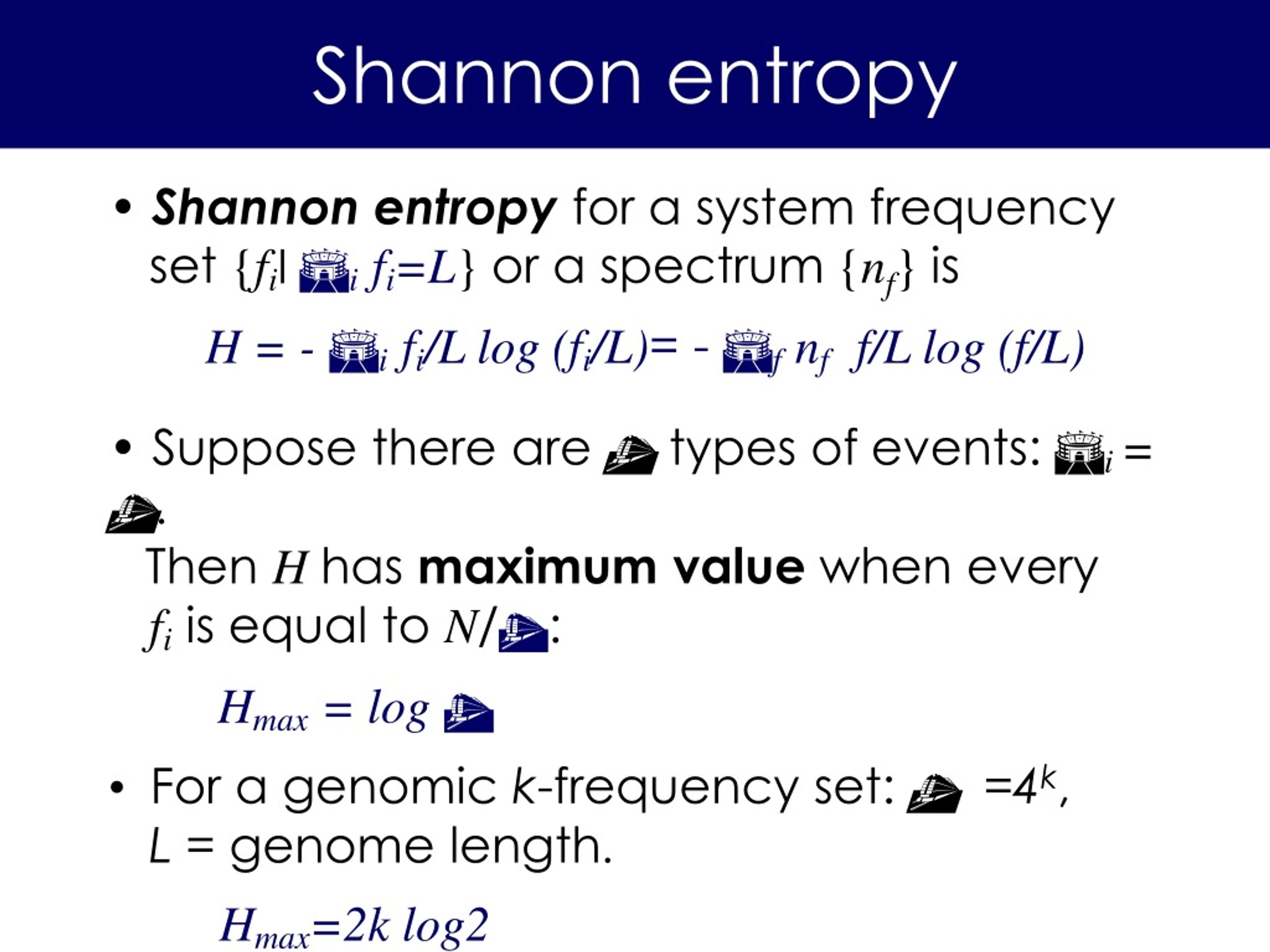

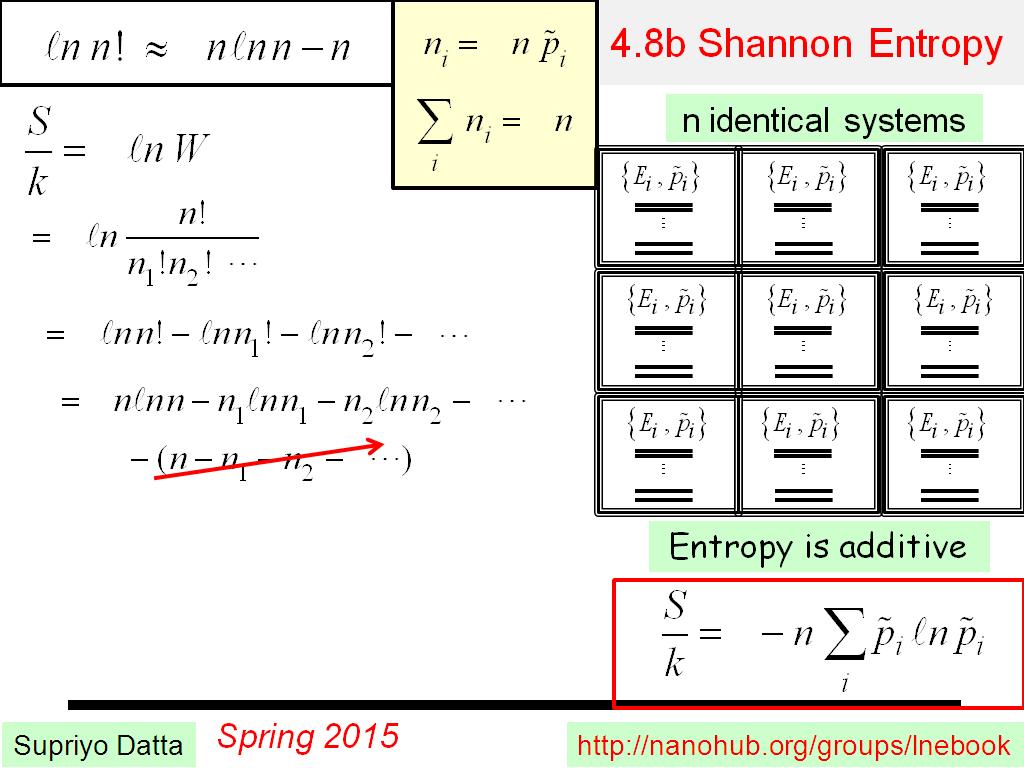

12/15/2023

Shannon entropy

Relatively little is known about how exactly psychedelics act on human functional brain networks. During the last few years, new neuroimaging techniques, such as functional magnetic resonance imaging (fMRI) 1, 2, have allowed noninvasive investigation of global brain activity in a variety of conditions, e.g., under anaesthesia, sleep, coma, and in altered states of consciousness induced by psychedelic drugs 3, 4, 5, 6, 7, 8, 9, 10. A hypothesis known as the entropic brain has been proposed, which holds that the stylized facts concerning altered states of consciousness induced by psychedelics can be partially explained in terms of higher entropy of the brain's functional connectivity 11. Although until recently the entropy of the brain had never been directly measured, the entropic brain hypothesis is empirically supported by several recent studies. Complex spatiotemporal cortical activation patterns have been reported during anesthesia with ketamine, which can induce vivid experiences ("ketamine dreams") 12. Perhaps the most convincing evidence supporting the hypothesis thus far has come from the study by Tagliazucchi et al., who found that after administration of the psychedelic psilocybin, the brain's functional patterns undergo a dramatic change characterized by the appearance of many transient low-stability structures 13.

Ayahuasca is a psychedelic beverage of Amazonian indigenous origin with legal status in Brazil in religious and scientific settings. In this context, we use tools and concepts from the theory of complex networks to analyze resting state fMRI data of the brains of human subjects under two distinct conditions: (i) in the ordinary waking state and (ii) in an altered state of consciousness induced by ingestion of Ayahuasca. We report an increase in the Shannon entropy of the degree distribution of the networks subsequent to Ayahuasca ingestion. We also find increased local and decreased global network integration. Our results are broadly consistent with the entropic brain hypothesis. Finally, we discuss our findings in the context of descriptions of "mind-expansion" frequently seen in self-reports of users of psychedelic drugs.
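The "Shannon entropy of the degree distribution" mentioned above can be illustrated with a small sketch. The study's actual pipeline (brain parcellation, how edges are chosen, which logarithm base is used) is not described here, so this assumes a symmetric node-by-node correlation matrix, an unweighted, undirected network built by keeping edges whose absolute correlation exceeds a hypothetical `threshold`, and entropy measured in bits. The function name `degree_distribution_entropy` is illustrative only.

```python
import numpy as np

def degree_distribution_entropy(corr: np.ndarray, threshold: float) -> float:
    """Shannon entropy (in bits) of the degree distribution of a thresholded network.

    Assumption: `corr` is a symmetric node-by-node correlation matrix, and an
    edge joins nodes i and j whenever |corr[i, j]| > threshold.
    """
    adjacency = np.abs(corr) > threshold
    np.fill_diagonal(adjacency, False)            # no self-loops
    degrees = adjacency.sum(axis=1)               # degree k of each node
    _, counts = np.unique(degrees, return_counts=True)
    p = counts / counts.sum()                     # empirical P(k), all entries > 0
    entropy = -np.sum(p * np.log2(p))             # H = -sum_k P(k) log2 P(k)
    return float(entropy) + 0.0                   # adding 0.0 turns -0.0 into 0.0

# Toy example on random signals (NOT real fMRI data)
rng = np.random.default_rng(0)
signals = rng.standard_normal((200, 30))          # 200 time points, 30 nodes
corr = np.corrcoef(signals, rowvar=False)         # 30 x 30 correlation matrix
print(degree_distribution_entropy(corr, threshold=0.1))
```

A network in which every node has the same degree gives an entropy of 0, while a network whose nodes spread over many different degrees gives a higher value; the result also depends on the threshold, which is one reason the choice of threshold matters in practice.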

In the world of data science, entropy is a crucial concept: it is a measure of uncertainty, randomness, or chaos in a set of data. One of the most common types of entropy used in data science is Shannon entropy. In this blog post, we'll guide you through the process of calculating the Shannon entropy of an array using Python's NumPy library.

Shannon entropy, named after Claude Shannon, is a measure of the uncertainty or randomness in a set of data. It's widely used in information theory to quantify information, uncertainty, or randomness: the higher the entropy, the more uncertain or random the data is.

NumPy is a powerful Python library for numerical computations. It provides a high-performance multidimensional array object and tools for working with these arrays, and it's an essential tool for any data scientist working with Python.

Getting Started with NumPy

Before we dive into the calculation of Shannon entropy, let's ensure that you have NumPy installed (for example, with `pip install numpy`).

The Shannon entropy of an array is $H = -\sum_i p_i \log_2 p_i$, where $p_i$ is the probability (relative frequency) of the $i$-th distinct element. This formula takes the sum, over all distinct elements, of each element's probability multiplied by the base-2 logarithm of that probability, and then negates the result.

Conclusion

And there you have it! You've successfully calculated the Shannon entropy of an array using Python's NumPy library. This is a powerful tool in your data science toolkit, allowing you to quantify the uncertainty or randomness in your data. Remember, the higher the Shannon entropy, the more uncertain or random the data is, so you can use this measure to understand the structure and predictability of your data. We hope this guide has been helpful.
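As a minimal sketch of the calculation this post describes, $H = -\sum_i p_i \log_2 p_i$ can be computed with NumPy as below. It assumes each distinct value in the array is one outcome and that its probability is its relative frequency; `shannon_entropy` is a name chosen here for illustration, not a NumPy function.

```python
# Requires NumPy (for example: pip install numpy)
import numpy as np

def shannon_entropy(arr) -> float:
    """Shannon entropy, in bits, of the values in `arr`.

    Each distinct value is treated as one outcome whose probability is its
    relative frequency in the (flattened) array.
    """
    values = np.asarray(arr).ravel()
    _, counts = np.unique(values, return_counts=True)
    probs = counts / counts.sum()
    # H = -sum(p * log2(p)); every p here is > 0, so log2 is always defined
    entropy = -np.sum(probs * np.log2(probs))
    return float(entropy) + 0.0   # adding 0.0 turns -0.0 into 0.0

print(shannon_entropy([1, 1, 1, 1]))   # one repeated value       -> 0.0
print(shannon_entropy([1, 2, 3, 4]))   # four equally likely values -> 2.0
print(shannon_entropy([1, 1, 1, 2]))   # skewed toward one value  (about 0.811)
```

The three calls show the behaviour described above: a constant array has no uncertainty, an array of equally frequent values has the maximum entropy for its number of outcomes, and a skewed array falls in between. Counting distinct values suits discrete data; continuous measurements would first need to be binned (for example with `np.histogram`) before the probabilities are estimated.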

Calculating Shannon Entropy of an Array Using Python's NumPy
